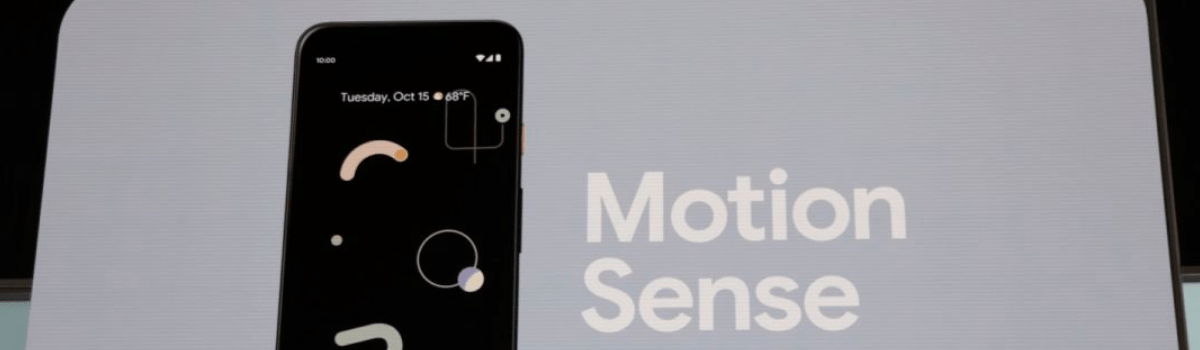

We’re in the second half of October, which means Google has announced its new hardware devices for the year. Among the many new products, the Pixel 4 caught my attention, and during the keynote Google focused on a new feature called Motion Sense. I would like to explain how it works and what you can do with it.

Android smartphones have had a feature similar to Motion Sense for years. I use the word “similar” very loosely here, though. Under the hood, there are some major differences between common “air gestures” for Android and what Motion Sense is capable of. But from an average user’s standpoint, I don’t think there is much of a difference.

I’m not trying to downplay what Google has done, but at the end of the day, Motion Sense is a dressed-up version of air gestures.

What Can You Do with Motion Sense?

Before we dive in, it’s a good idea to get you up to speed on what you can do with the new Pixel 4 smartphones. Both the Pixel 4 and the Pixel 4 XL come with the same radar chip based on Google’s Project Soli technology. This is one of the reasons why the new Google phones have a big forehead.

What better way to give you a brief example of what Motion Sense can do than to show you the video Google recently uploaded? I can also break down what is happening in it, because you may not notice all of the small things. The first one is overlooked by many: the Pixel 4 “wakes up” when the person’s face gets close to it.

It won’t unlock just from this, but it will wake up so you don’t have to touch the device.

From there, the phone starts its new facial recognition feature and then fully unlocks. All of this happens without the person touching the smartphone. I’ll talk about how this works later, but I thought it was an interesting detail that gets overlooked since it happens so early in the video.

We can hear that the person is listening to music, and they then change the track by waving their hand in the air. This is one of those air gestures that I mentioned earlier, and it’s something that other OEMs have done in the past. The video wraps up with the person doing a few more air gestures to show how easy it is to skip through music tracks.

Motion Sense Throughout the Android OS

The video above gives us an example of how Motion Sense can be used in a music application, but what about other parts of a smartphone? I already mentioned that the Pixel 4 and Pixel 4 XL can wake up when they detect a face. So what else can you do with Motion Sense on the new Pixel smartphones?

Google is also building support for Motion Sense into some of the core Android apps. This gives you the ability to wave your hand above the smartphone to snooze an alarm (I love this idea) or to silence an incoming phone call. You can even dismiss a timer without having to touch the screen.

However, Google says they don’t want to layer on a bunch of Motion Sense features just because. Instead, they would rather use it to improve “the emotional components of interaction.”

That said, even if Google doesn’t want to pile on features using Motion Sense, I bet the developer community will. I suspect we’ll see something with Tasker and Motion Sense in the weeks or months to come.

How Does Motion Sense Work?

This is where Motion Sense differs from the air gesture features we’ve seen other OEMs implement. Some will use the smartphone’s ambient sensor to detect said gestures while others will opt for using a ToF camera sensor instead. LG has even given its ToF sensor a name and calls it the “Z Camera.”

Google, on the other hand, is using a radar chip to capture motion data. This comes from one of Google’s moonshot investments, which was given the name Project Soli. The company first demonstrated what it could do with it back in 2015, but over the years the results seemed to be nothing more than conceptual demonstrations.

Even the denim jacket Google released in partnership with Levi’s, which came out of the related Project Jacquard rather than Soli itself, felt uninspiring to me.

But having a radar chip on the front of a smartphone could actually be very useful. Since it is different from the other sensors that have been used for air gestures, it opens the door to additional uses. The Pixel 4 waking up when a face gets closer to it is a great example of this.

Motion Sense Detects Presence

The smartphone is able to do this because it creates a virtual bubble in front of the device. The bubble is only active when the phone is “facing up or out,” and it can stay active because the radar chip uses a tremendously small amount of battery. We’re told the bubble is a hemisphere with a radius of about a foot or two.

When it detects that you are nearby, it will allow the always-on display to work, but it will turn the display off if it detects that nothing is around.
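To make the “virtual bubble” idea a little more concrete, here is a minimal sketch of a hemisphere check. It is not Google’s implementation and does not use any real Soli or Android API. The `Detection` type, the function names, and the two-foot default radius are my own assumptions for illustration, as is the choice to keep the display off whenever the phone is not facing up or out.

```python
import math
from dataclasses import dataclass

FOOT_IN_METERS = 0.3048


@dataclass
class Detection:
    """Hypothetical radar detection in meters, relative to the sensor.

    The z axis points straight out of the screen, so z < 0 is behind the phone.
    """
    x: float
    y: float
    z: float


def in_presence_bubble(d: Detection, radius_m: float = 2 * FOOT_IN_METERS) -> bool:
    """Return True if the detection lies inside the hemisphere in front of the screen."""
    if d.z < 0:
        # Behind the screen plane, so outside the hemisphere.
        return False
    distance = math.sqrt(d.x ** 2 + d.y ** 2 + d.z ** 2)
    return distance <= radius_m


def always_on_display_enabled(detections, facing_up_or_out: bool) -> bool:
    """Assumed policy: show the always-on display only while something is in the bubble."""
    if not facing_up_or_out:
        # Assumption: with no active bubble, nothing can be detected.
        return False
    return any(in_presence_bubble(d) for d in detections)
```

For example, `always_on_display_enabled([Detection(0.0, 0.0, 0.2)], True)` treats a hand 20 cm above the screen as present, while the same reading with the phone face down is ignored.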

Motion Sense Measures Distances

While it’s collecting data on the things surrounding the Pixel 4 and Pixel 4 XL, Motion Sense can also measure the distance to the objects it detects. That’s how it knows you want to unlock the phone and aren’t just looking at the information on the Lock Screen.

Google will use this data for various features, but one that is highlighted is how the new smartphones will quickly turn on the screen when you reach for them (and then activate the facial recognition sensors). Likewise, if an alarm or ringtone is playing and you reach for the smartphone, it will begin to lower the volume of that audio.
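The volume-lowering behavior can be pictured as a simple mapping from hand distance to loudness. The sketch below is only an illustration under my own assumptions: the function name, the linear ramp, and every threshold (start fading at 0.5 m, reach the quietest level at 0.1 m, never drop below 20% volume) are made up, since Google has not published how the Pixel 4 does this.

```python
def ducked_volume(
    distance_m: float,
    full_volume: float = 1.0,
    min_volume: float = 0.2,
    start_m: float = 0.5,
    end_m: float = 0.1,
) -> float:
    """Return the playback volume for a hand at distance_m from the phone.

    All thresholds are hypothetical. Beyond start_m the volume is untouched,
    within end_m it sits at min_volume, and in between it ramps linearly.
    """
    if distance_m >= start_m:
        return full_volume
    if distance_m <= end_m:
        return min_volume
    # Fraction of the way from the near threshold to the far threshold (0..1).
    fraction = (distance_m - end_m) / (start_m - end_m)
    return min_volume + fraction * (full_volume - min_volume)
```

A linear ramp is just the simplest choice here; a real implementation could use any curve, or react to how fast the hand is approaching instead of its distance alone.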

Motion Sense Detects Quick Gestures

I’ve been calling these things air gestures throughout the article, but Google looks to have named them Quick Gestures. These are the user-facing features that you actively trigger with some sort of gesture. Right now, the headline example is skipping through the tracks in your music application, but snoozing an alarm, silencing a call, and dismissing a timer fall under the same category.

I fully expect to see Google expand the features of Motion Sense in the months (and possibly years) to come. It’s unclear if the company will stick with it or drop it based on low usage. That’s the thing with air gestures: the gestures that are available aren’t that well known.

Either that, or they’re for something that isn’t used a lot. I can totally see people enjoying the small things Motion Sense brings (like lowering the audio), but I’m not yet convinced that we need a radar chip in a phone. At least not one that compromises the design of a smartphone.